The MAIN OBJECTIVES OF THE RESEARCH PROGRAMME are: to train, by research and by example, 15 Early Stage Researchers (ESRs) in the field of UQ and Optimisation so that they become leading independent researchers and entrepreneurs who will increase the innovation capacity of the EU; to equip them with the skills needed for successful careers in academia and industry; to develop, through the ESRs’ individual projects, fundamental mathematical methods and algorithms that bridge the gap between Uncertainty Quantification and Optimisation and between Probability Theory and Imprecise Probability Theory for Uncertainty Quantification; and to efficiently solve high-dimensional, expensive and complex engineering problems.

The research programme is divided into three main Work Packages (WPs) covering three fundamental areas of research underpinning the OUU of any system or process: WP1-Modelling, Simulation and VP, WP2-Uncertainty Quantification, WP3-Optimisation Under Uncertainty. Figure 1.1 gives an overview of the logic and interrelations of the research programme. Each main WP is further broken down into a number of specific sub-WPs (see Table 1.1) on key topics, each with a set of high-level research objectives. The overall research objective of WP1 is to define a set of representative reference case studies to support the development and benchmarking of the UQ and OUU techniques developed in the other WPs. The research objective of WP2 is to develop techniques for the efficient treatment of uncertainties of different natures, while the objective of WP3 is to integrate optimisation and uncertainty quantification.

The research programme has four major innovative aspects: 1- the use of Imprecise Probability Theories for Uncertainty Quantification and Optimisation Under Uncertainty, 2- the integration of UQ and optimisation for large-scale expensive problems, 3- the introduction of evolvable OUU and 4- the development of distributed computing techniques for multidisciplinary design in a peer-to-peer architecture, where delay and corruption of information are simulated, and expert judgements and subjective probabilities are introduced into the design process. The last aspect is particularly important when trying to optimise long-term processes that are normally divided into multiple phases or stages.

In the following, the state-of-the-art and innovative contributions will be presented per sub-WP.

WP1.1 MULTIDISCIPLINARY MODEL-BASED COLLABORATIVE SYSTEM ENGINEERING
WP1.2 MULTI-FIDELITY MODELLING (Lead Partner – Politecnico di Milano)
WP2.1 IMPRECISE PROBABILITIES FOR UQ (Lead Partner – University of Durham)
WP2.2 HIGH DIMENSIONAL UNCERTAINTY PROPAGATION
WP2.3 UQ IN EXPERIMENTAL ANALYSIS AND MODEL VALIDATION (Lead Partner – Von Karman Institute for Fluid Dynamics)
WP2.4 ELICITATION AND AGGREGATION OF STRUCTURED EXPERT JUDGEMENT
WP3.1 WORST-CASE SCENARIO AND MULTI-LEVEL OPTIMISATION
WP3.2 HYBRID MANY-OBJECTIVE OPTIMISATION OF LARGE SCALE EXPENSIVE PROBLEMS (Lead Partner – Technische Hochschule Köln)
WP3.3 EVIDENCE-BASED ROBUST OPTIMISATION AND DECISION MAKING (EBRO)
WP3.4 RELIABILITY-BASED DESIGN OPTIMISATION (RBDO)
WP3.5 EVOLVABLE OPTIMISATION UNDER UNCERTAINTY

WP1.1 MULTIDISCIPLINARY MODEL-BASED COLLABORATIVE SYSTEM ENGINEERING

Objective: To study and implement new collaborative technologies applicable to complex multidisciplinary, multi-phase design processes over a network of multiple, geographically distributed organisations using shared computing resources on HPCs or clouds.

The coordination between interdisciplinary groups working on the optimisation of complex systems and processes, particularly in the area of multidisciplinary engineering design, is an important open research topic with direct industrial applications. To respond to industry's need for optimisation that handles multiple objectives and constraints defined in different disciplines and efficiently integrates CAD/CAE software tools, collaborative optimisation platforms such as the ESTECO enterprise suite have recently been developed. For large-scale industrial multidisciplinary design optimisation (MDO), the different disciplines can be defined by different, widely dispersed groups of people, making collaborative optimisation a real challenge from a computational point of view.

Model-Based System Engineering (MBSE) is an emerging branch of system engineering that addresses the problems associated with the management of complex systems. It aims at formalising the application of modelling to support system requirements, design, analysis and verification/validation activities, starting from the conceptual design phase and continuing through to the later life-cycle phases of a project. The aerospace sector represents an ideal application field due to the high system complexity involved.

The innovative aspect of WP1.1 is the integration of the MBSE framework with state-of-the-art distributed MDO platforms for collaborative optimisation and uncertainty treatment in multi-phase design processes over complex and distributed peer-to-peer networks that share software, computing resources, models, data and knowledge bases on HPCs, grids or clouds, in order to improve process and product reliability and enhance efficiency through design automation. More importantly, WP1.1 will introduce a model of the process itself, with the associated uncertainty that will be studied in WP3.6. The expected result will be a paradigm for robust system architectures that codifies the end-product life-cycle from the earliest design phases.

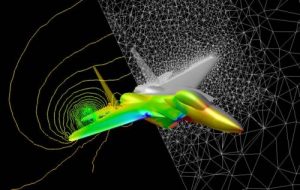

WP1.2 MULTI-FIDELITY MODELLING

Objective: To define and develop a set of multi-fidelity models covering key applications in space and aerospace.

Seven specific aerospace applications will be used to assess the suitability of the approaches developed in UTOPIAE: I) design of an anti-icing system, II) re-entry of space vehicles and debris, III) energy-driven design of civil airplanes, IV) multi-sensor tracking of objects during re-entry, V) ATM with Federated Satellite Systems, VI) morphing of rotor blades and VII) end-to-end design of space systems.

In-flight icing modelling is affected by a number of uncertainties, both epistemic and aleatoric, that currently prevent its application to the design and certification phases of fixed- and rotary-wing aircraft; existing processes require costly experimental verification and/or in-flight testing. The air transport industry faces very hard challenges in meeting the objectives of the Horizon 2020/2050 programmes. In particular, the reduction of CO2 emissions is a very strong motivation for an energy-driven systemic approach to the design of aircraft and operations. In general, greener and more efficient technologies and overall design and manufacturing processes are required for future aerospace vehicles. The modelling of morphing structures is affected by diverse epistemic and aleatoric uncertainties due to the complex interaction of the morphing structure with the overall aircraft structure and aerodynamics. The prediction of the re-entry trajectory and footprint of a space object is an extremely challenging task that is exacerbated in the case of fragmentation. Tracking these objects using multiple stations that provide heterogeneous observations is also an open question. Tracking vehicles using available satellite services is a novelty in itself and would provide an unprecedented capability to track vehicles even in the absence of beacons, in the case of disasters or illegal situations (such as smuggling or hijacking). Last but not least, end-to-end space systems design is an example of a process with evolvable requirements and objectives.

Multi-fidelity approaches can be used to reduce the computational cost of providing effective solutions to the design and control of these systems and processes. In the multi-fidelity approach, low- and high-fidelity models of the same system are considered and suitably scheduled during the process to produce reliable results at the lowest computational cost. An example of multi-fidelity in optimisation is Approximation and Model Management Optimisation, while techniques like multi-fidelity evolution control are used to schedule the fidelity levels to make the process efficient. Recently a fundamentally different approach was proposed that uses the tools of estimation theory to fuse information from multi-fidelity analyses, resulting in a Bayesian-based approach to mitigating risk in complex system design and analysis.

WP1.2 will develop multi-fidelity models for all the above-mentioned applications. The innovative aspect will be to investigate and characterise the uncertainty that comes with each level of model fidelity for all seven applications. Furthermore, icing models will be derived for the first time from fundamental physics and experimental data. Models used in WP1.2 are already validated or, in the case of the icing-accretion and re-entry models, will be validated against available and known experimental results. More techniques for model validation can be found in WP2.3.
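
As a minimal sketch of the estimation-theory idea mentioned above, the snippet below fuses a low-fidelity and a high-fidelity estimate of the same quantity by inverse-variance weighting. This is the textbook minimum-variance combination of independent, unbiased estimates and is not claimed to be the specific method referred to in the text; the function name `fuse_estimates` and all numerical values are hypothetical.

```python
def fuse_estimates(estimates):
    """Fuse independent, unbiased (mean, variance) pairs by inverse-variance weighting.

    Assumption: every variance is strictly positive and the estimates are
    statistically independent. Returns the fused (mean, variance).
    """
    if not estimates:
        raise ValueError("at least one estimate is required")
    # Precision (1 / variance) acts as the weight of each model output.
    precision = sum(1.0 / var for _, var in estimates)
    mean = sum(m / var for m, var in estimates) / precision
    return mean, 1.0 / precision


# Hypothetical outputs of two models of the same quantity of interest.
low_fidelity = (10.0, 4.0)   # cheap model: larger variance
high_fidelity = (12.0, 1.0)  # expensive model: smaller variance

fused_mean, fused_var = fuse_estimates([low_fidelity, high_fidelity])
print(f"fused mean = {fused_mean:.3f}, fused variance = {fused_var:.3f}")
```

Under these assumptions the fused variance is never larger than the smallest input variance, which is why even a cheap, noisy model can contribute useful information.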

ESRs 2, 5, 10, 15

WP2.1 IMPRECISE PROBABILITIES FOR UQ

Objective: To look into the use of the most general representation of uncertainty using IP.

Imprecise Probability Theory (IPT) generalises Probability Theory to allow for partial probability specifications, or in other words, incomplete, vague and conflicting information. In all these cases, a single unique probability distribution may be difficult to define or might be inappropriate to completely capture the nature of uncertainty. Quantification of uncertainty is generally done using probability distributions, usually satisfying Kolmogorov’s axioms and the machinery of Probability Theory. Many scholars, from Laplace to de Finetti, Ramsey, Cox and Lindley, argue that this is the only possible representation of uncertainty. However, this has not been unanimously accepted by scientists, statisticians and probabilists.

IPT includes a number of hierarchical extensions to Probability Theory. Dezert-Smarandache Theory (DSmT) of paradoxical reasoning (and the extension of DST to Evidence) has been used in target tracking and state estimation; robust Bayesian methods have been proposed for situations in which model uncertainty is hard to quantify due to lack of data and, therefore, information on a system or process comes from expert knowledge and experience. IPT has been applied to study transition probabilities in (hidden) Markov chains, leading to important applications in state estimation, filtering and control. WP2.1 will propose a whole range of innovative approaches based on Imprecise Probabilities, and will consider related implications such as information fusion, aggregation rules and interpretation of the results.

ESRs 1, 8, 9, 14

WP2.2 HIGH DIMENSIONAL UNCERTAINTY PROPAGATION

Objective: To develop and improve techniques that reduce the computational cost of deriving accurate uncertainty quantifications, using both Probability and Imprecise Probability theories.

Uncertainty propagation is affected by the so-called “Curse of Dimensionality”: as the dimension of the uncertain space increases, the computational cost increases exponentially for the same quality of the solution. Several techniques have been proposed, though all with drawbacks. A weighted function space-based quasi-Monte Carlo method has recently been proposed, though this method remains slow when the number of effective dimensions is much less than the total. Another well-known technique is based on High-Dimensional Model Representation (HDMR), which can detect the interactions between different uncertainties by decomposing the problem into a series of low-dimensional additive functions. This approach can be expensive and very sensitive to the choice of the anchor point. A technique using anisotropic sparse grid construction based on a priori and a posteriori analysis has been shown to be more efficient than the isotropic sparse grid, even if interactions among different dimensions are not well captured.

All the proposed numerical algorithms will need to be extended to work on parallel machines in a High Performance Computing (HPC) framework. The methods and algorithms need to be scalable, and the implementation needs to consider that both the deterministic model and the uncertainty model can be parallelised.

Model reduction mitigates the curse of dimensionality by selecting only the most important groups of parameters or the coupling between them. A number of model reduction techniques are based on an appropriate projection of the uncertain space, e.g. using Proper Orthogonal Decomposition (POD), Analysis Of Variance (ANOVA) or HDMR. In the case of Imprecise Probabilities (IP), techniques have been proposed to reduce the number of uncertain parameters or uncertain intervals associated with each parameter. More recently an innovative decomposition technique, called H-decomposition, was developed to efficiently apply Evidence Theory to weakly coupled systems, and a novel HDMR approach was developed for the automatic detection of parameter coupling. UTOPIAE will study the impact of a reduced order model on the processes of robust and reliability-based optimisation, an area still not sufficiently explored, and will extend and generalise anchored-ANOVA, adaptive HDMR and H-decomposition techniques.
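
To make the anchor-point sensitivity mentioned above concrete, the following is a minimal sketch of a first-order anchored (cut) HDMR surrogate. It is a generic textbook construction under stated assumptions, not the adaptive HDMR or H-decomposition methods of the programme; the helper `cut_hdmr_first_order` and the two test functions are hypothetical.

```python
def cut_hdmr_first_order(f, anchor):
    """Build a first-order anchored (cut) HDMR surrogate of f.

    The surrogate is f(c) + sum_i [f(c with c_i replaced by x_i) - f(c)],
    where c is the anchor point. It is exact for purely additive functions.
    """
    f0 = f(anchor)

    def surrogate(x):
        total = f0
        for i, xi in enumerate(x):
            point = list(anchor)
            point[i] = xi          # vary one coordinate, keep the others anchored
            total += f(point) - f0
        return total

    return surrogate


def additive(x):
    # No interaction between variables: first-order terms capture everything.
    return x[0] ** 2 + 3.0 * x[1] + 2.0 * x[2]


def coupled(x):
    # Pure interaction term: first-order terms depend strongly on the anchor.
    return x[0] * x[1]


x = [0.5, -1.0, 2.0]
exact_for_additive = cut_hdmr_first_order(additive, [0.0, 0.0, 0.0])(x)

# Same coupled function, same query point, two different anchors.
approx_anchor_a = cut_hdmr_first_order(coupled, [0.0, 0.0])([1.0, 1.0])
approx_anchor_b = cut_hdmr_first_order(coupled, [1.0, 0.0])([1.0, 1.0])
print(exact_for_additive, approx_anchor_a, approx_anchor_b)
```

In this toy case the additive function is reproduced exactly, while the coupled function is recovered with one anchor and missed entirely with the other. Higher-order terms would be needed to remove that dependence, at a cost that grows with the number of interacting variables.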

WP2.3 UQ IN EXPERIMENTAL ANALYSIS AND MODEL VALIDATION

Objective: To study optimal techniques to design new experiments, improve the robustness of the numerical simulation and validate the simulation models.

Experiments incur two basic kinds of uncertainty: systematic, reproducible errors affecting the whole experiment, and random uncertainties associated with intrinsic variations in the experimental conditions, in the sensor readings, or with deficiencies in defining the quantity being measured. Not considering the statistical variability that stems from random uncertainties when validating a numerical model can lead to erroneous conclusions. At the same time one is interested in capturing discrepancies in the model itself, generally evidenced by a bias, or in guiding the experiments to fully validate the modelled components or characterise the unmodelled ones. While several techniques exist to numerically propagate experimental data, two open questions remain: how to use numerical simulations to improve experiments, and how experimental data can be used to improve models and fully characterise model uncertainty. The general starting assumption is that sensors and measurement models are well understood so that validation is possible.

In UTOPIAE, a characterisation of model uncertainties will be performed using techniques for capturing scattered data, and used to validate the numerical tool. The novelty in WP2.3 is that appropriate discrepancy functions will be introduced where uncertainty in model parameters is not sufficient to fit the model to the experiments, and Bayesian inference will be used to characterise the uncertainty in model parameters from experiments. Furthermore, a new technique based on Bayesian and Robust Bayesian inference will be applied to determine new operating conditions in the experiment that reduce uncertainty in the simulation model when sensor and measurement models are not well known. Once uncertainty in the model is fully characterised, the predictions from the simulations will be used to update the design of the experiments.

ESRs 3, 6, 9, 10

WP2.4 ELICITATION AND AGGREGATION OF STRUCTURED EXPERT JUDGEMENT

Objective: To define protocols for the elicitation and aggregation of expert judgement in multi-phase decision processes.

In many decision problems, data on future events are unavailable and structured expert judgement is required to capture the epistemic uncertainty and subjective probability assignment that exist in human opinions. In expert judgement, probabilities are subjective in nature and can be represented by a degree of belief rather than a probability distribution. In a probabilistic framework, this belief needs to be encoded into a probability distribution that might not be supported by enough data. IP theories, like Dempster-Shafer Theory (DST), can provide an alternative framework for subjective beliefs. Epistemic uncertainty can be quantified as propositions or intervals, and a basic belief mass is assigned to them without the need to infer an actual distribution.

To date, little literature has been published on the evaluation of IP elicitation and the associated challenges, e.g., fusing the judgements of multiple experts. Eliciting expert judgement is typically laborious, and the extent to which the process can be automated using technology has not been investigated in detail. In addition, there are many papers applying mathematical models to aggregate expert input; however, current mathematical and behavioural models for combining judgements from multiple experts do not have all the desired mathematical properties. For example, Bayesian methodologies provide a mechanism for aggregating multiple experts but pose philosophical challenges in how to interpret the output.

The innovation in UTOPIAE will be to use probabilistic and IP approaches to derive reliable quantification. WP2.4 will introduce two innovative aspects: elicitation and data fusion using IP theories in engineering design is a first; furthermore, incorporating this aspect in the optimisation of a system or process is essential but has never been investigated before. The current use of Probability Theory in OUU might limit the treatment of epistemic uncertainty and subjective probabilities, thus UTOPIAE will introduce IPT as part of OUU, an advancement that can lead to a distinctive edge for Europe.
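
The sketch below illustrates, under stated assumptions, how basic belief masses assigned to sets of outcomes yield belief and plausibility bounds, and how two experts could be fused with Dempster's rule of combination. Dempster's rule is only one of several possible aggregation rules and the text does not say which rule UTOPIAE adopts; the frame of discernment, the two experts and all mass values are hypothetical.

```python
def belief(masses, hypothesis):
    """Lower bound: total mass of focal sets entirely contained in the hypothesis."""
    return sum(m for focal, m in masses.items() if focal <= hypothesis)


def plausibility(masses, hypothesis):
    """Upper bound: total mass of focal sets that intersect the hypothesis."""
    return sum(m for focal, m in masses.items() if focal & hypothesis)


def dempster_combine(m1, m2):
    """Combine two basic belief assignments with Dempster's rule.

    Mass falling on empty intersections is treated as conflict and
    renormalised away; total conflict makes the rule undefined.
    """
    combined, conflict = {}, 0.0
    for a, wa in m1.items():
        for b, wb in m2.items():
            common = a & b
            if common:
                combined[common] = combined.get(common, 0.0) + wa * wb
            else:
                conflict += wa * wb
    if conflict >= 1.0:
        raise ValueError("experts are in total conflict")
    return {focal: w / (1.0 - conflict) for focal, w in combined.items()}


# Hypothetical frame of discernment for a load level.
LOW, NOMINAL, HIGH = "low", "nominal", "high"
FRAME = frozenset({LOW, NOMINAL, HIGH})

expert_a = {frozenset({LOW}): 0.6, frozenset({LOW, NOMINAL}): 0.3, FRAME: 0.1}
expert_b = {frozenset({NOMINAL}): 0.5, FRAME: 0.5}

fused = dempster_combine(expert_a, expert_b)
query = frozenset({LOW})
print("expert A:", belief(expert_a, query), plausibility(expert_a, query))
print("fused   :", belief(fused, query), plausibility(fused, query))
```

The gap between belief and plausibility is what a single probability distribution cannot express: it measures how much of the expert's mass is not committed to, nor against, the hypothesis.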

WP3.1 WORST-CASE SCENARIO AND MULTI-LEVEL OPTIMISATION

Objective: To develop algorithms and methods for worst-case and/or multi-level optimisation.

In worst-case scenario optimisation one seeks the best performance under the worst-case conditions. Worst-case design is important whenever robustness to adverse environmental conditions should be ensured regardless of their probability, since there cannot be uncertainty without a set of possible uncertainty outcomes. This leads to min-max optimisation, where the solution is found by minimising the maximum output over all possible scenarios, while implementing the multi-level approach that uses measures of different accuracy for analysis/evaluation tools with various levels of complexity. Most optimisation techniques are based on Lipschitz optimisation algorithms, global optimisation algorithms for min-max optimisation via relaxation and Kriging. Recently some heuristic methods have been used, e.g., particle swarm optimisation, genetic or evolutionary algorithms, differential evolution and game theory. This WP will create a fundamental building block to solve Evidence-Based Robust Optimisation and Reliability-Based Optimisation in WP3.4 and WP3.5.
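
A minimal sketch of the min-max formulation described above, using exhaustive search over small, discrete sets of designs and scenarios. Realistic problems need the relaxation, surrogate or heuristic methods listed in the text; the cost function, the design grid and the scenario set here are hypothetical.

```python
def worst_case(cost, design, scenarios):
    """Inner maximisation: the worst cost a design can incur over all scenarios."""
    return max(cost(design, u) for u in scenarios)


def min_max(cost, designs, scenarios):
    """Outer minimisation: the design whose worst-case cost is smallest."""
    return min(designs, key=lambda d: worst_case(cost, d, scenarios))


def cost(design, scenario):
    # Hypothetical cost: squared mismatch between the design and the scenario.
    return (design - scenario) ** 2


designs = [i / 10 for i in range(21)]   # candidate designs 0.0, 0.1, ..., 2.0
scenarios = [0.0, 0.5, 2.0]             # possible uncertain outcomes

best_design = min_max(cost, designs, scenarios)
best_worst_cost = worst_case(cost, best_design, scenarios)
print(best_design, best_worst_cost)
```

Note that the selected design balances the two extreme scenarios and ignores how likely each one is, which is exactly the "regardless of their probability" property of worst-case design.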

WP3.2 HYBRID MANY-OBJECTIVE OPTIMISATION OF LARGE SCALE EXPENSIVE PROBLEMS

Objective: To improve existing and develop new techniques for handling expensive many-objective optimisation problems in aerospace applications with mixed discrete and continuous decision variables.

Many-objective optimisation was introduced to study problems with more than three objectives, where state-of-the-art multi-objective techniques often fail. Pareto dominance, which requires better or equal performance in all objectives, fails in higher-dimensional objective spaces because more and more solutions become incomparable. Techniques considering the dominated hypervolume have a computational cost that increases exponentially with the number of objectives. Alternative approaches are rather specialised and have not been considered frequently for relevant applications. Another method is to reduce the dimension of the objective space. When objective functions and constraints are expensive to evaluate, many-objective optimisation becomes even more challenging. Surrogate models can be used to optimise on computationally cheaper models and transfer the results to the original large-scale expensive problems. This has been done only rarely for many-objective optimisation to date, but is potentially applicable to expensive problems of industrial interest.

Combinatorial and Mixed-Integer Nonlinear Optimisation techniques are essential when systems and processes are integrated (e.g., integrated aircraft and operation design). Many combinatorial optimisation problems are naturally modelled using integer programming techniques, and classical techniques such as using valid inequalities and defining stronger reformulations have shaped this area for many decades. Mixed-Integer Nonlinear Programming (MINLP) offers natural ways of modelling complicated real-life problems. MINLP has benefited from “smart” approximations such as outer approximation; however, sophisticated heuristics generally offer more efficient solutions. In evolutionary computation, combinatorial as well as mixed-integer problems are handled the same way. State-of-the-art methods use hierarchical or simultaneous approaches. The former implement a higher-level optimisation problem for the discrete part and a subproblem for the continuous variables, while the latter treat parameters of discrete and continuous type simultaneously. In different problem domains, meta-model assisted evolutionary approaches have already been applied to mixed-integer optimisation problems. With respect to combinatorial optimisation, different variants of evolutionary algorithms have been developed.

UTOPIAE will investigate methods to deal with many-objective optimisation, for both cheap and expensive objective and constraint evaluations, with mixed (hybrid) variables, continuous and discrete. UTOPIAE will combine mathematical theories developed in the areas of combinatorial optimisation and nonlinear programming and, starting from existing algorithms, will develop enhanced optimisation algorithms for the efficient optimisation of many-objective mixed-integer nonlinear design problems.
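
The loss of discriminating power of Pareto dominance noted above can be illustrated with a small numerical experiment: for uniformly random objective vectors, the share of mutually non-dominated points grows quickly with the number of objectives. This is a sketch under simple assumptions (minimisation, independent uniform objectives, a fixed hypothetical sample size), not a benchmark of any UTOPIAE algorithm.

```python
import random


def dominates(a, b):
    """True if a Pareto-dominates b: no worse in every objective, better in one."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def non_dominated_fraction(n_points, n_objectives, rng):
    """Fraction of random points that no other point in the sample dominates."""
    points = [[rng.random() for _ in range(n_objectives)] for _ in range(n_points)]
    count = sum(
        1 for p in points if not any(dominates(q, p) for q in points if q is not p)
    )
    return count / n_points


rng = random.Random(0)  # fixed seed so the experiment is repeatable
frac_few = non_dominated_fraction(200, 2, rng)
frac_many = non_dominated_fraction(200, 10, rng)
print(f"2 objectives: {frac_few:.2f} non-dominated, 10 objectives: {frac_many:.2f}")
```

When most of the population is non-dominated, dominance-based ranking provides almost no selection pressure, which motivates the hypervolume-based, dimension-reduction and surrogate-assisted alternatives discussed in the text.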


ESRs 2, 5, 12, 13

Objective: To extend the computational optimisation framework developed to make evidence-based robust optimisation (EBRO) efficient on high-dimensional problems with the inclusion of expert opinions.

Dempster-Shafer theory (DST), or Evidence Theory (ET), is a branch of mathematics on uncertain reasoning that allows the decision-maker to deal with uncertain events and with incomplete or conflicting information. ET is a generalisation of classical probability and possibility theory, used mainly in information fusion, decision-making, risk analysis, autonomy, intelligent systems, and planning and scheduling under uncertainty. Recently, ET has been considered for applications in the robust design of structures and mechanisms in aerospace and civil engineering, such as reusable launchers, aerocapture manoeuvres and low-thrust trajectories. Concepts of robustness and robust design optimisation based on DST, using gradient methods in combination with response surfaces, were first proposed in the early 2000s. Bauer proposed different techniques to reduce the computational complexity of computing the cumulative Belief supporting a given proposition. Computational frameworks have been developed to use ET efficiently in high-dimensional model-based system optimisation. Starting from DST as a paradigmatic example of Imprecise Probabilities, WP3.3 will extend the computational optimisation framework developed by Vasile et al. to the use of Coherent Upper and Lower Previsions in system design and process control.
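
The notion of Belief supporting a proposition can be made concrete with a small example. The following is a minimal sketch, assuming a finite frame of discernment and a basic probability assignment given as a dictionary of focal elements. The frame, the masses and the function names are hypothetical illustrations and do not reproduce the framework of Vasile et al. Belief sums the mass of all focal elements contained in the proposition, and Plausibility sums the mass of all focal elements that intersect it.

```python
def belief(bpa, proposition):
    """Bel(A): total mass of focal elements fully contained in A."""
    return sum(mass for focal, mass in bpa.items() if focal <= proposition)


def plausibility(bpa, proposition):
    """Pl(A): total mass of focal elements that intersect A."""
    return sum(mass for focal, mass in bpa.items() if focal & proposition)


# Hypothetical expert opinion on a load level; the masses sum to 1.
# Mass on a non-singleton set expresses ignorance among its elements.
BPA = {
    frozenset({"low"}): 0.5,
    frozenset({"low", "medium"}): 0.3,
    frozenset({"low", "medium", "high"}): 0.2,
}

if __name__ == "__main__":
    proposition = frozenset({"low", "medium"})
    print("Bel:", belief(BPA, proposition))
    print("Pl: ", plausibility(BPA, proposition))
```

The interval between Belief and Plausibility bounds the probability of the proposition. The number of focal elements grows quickly with the number of uncertain parameters, which is why efficient computation of Belief matters for high-dimensional problems.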


Objective: To study and develop new techniques for large-scale constrained reliability-based design optimisation (RBDO) problems.

research field. Many techniques have been introduced in the last ten years, from modified sampling techniques for estimating failure probabilities, to the use of neural networks or support vector machines, to the formalisation of different optimisation strategies (single or double loops, sequential techniques) and the use of evolutionary algorithms. Two major bottlenecks are the limited applicability of some approaches to large-scale problems with several uncertain variables, and the high computational cost incurred when time-consuming simulations are used to evaluate the limit state functions. In WP3.4, UTOPIAE will tackle a number of open points: the relationship between RBDO and robust design optimisation (RDO); the investigation of new reliability measures and their application in multi-objective optimisation under uncertainties; the use of evolutionary algorithms to solve RBDO and RDO problems; the efficient treatment of multi-constrained problems or non-linear state functions; the treatment of uncertain experimental data with unknown probability density functions; the treatment of dependent non-normal variables (both in reliability analysis and in RDO); and the application of response surface techniques to large-scale RBDO problems.
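
The cost of evaluating limit state functions by sampling can be seen in a simple failure probability estimate. The following is a minimal sketch, assuming a hypothetical limit state g = R - S with independent normal resistance R and load S, and the common convention that g <= 0 denotes failure. All numerical values and function names are illustrative. For this particular case the exact failure probability is available in closed form, which allows the crude Monte Carlo estimate to be checked.

```python
import math
import random
from statistics import NormalDist

# Hypothetical resistance and load parameters (mean, standard deviation).
MU_R, SIGMA_R = 10.0, 1.0
MU_S, SIGMA_S = 6.0, 1.5


def sample_inputs(rng):
    """Draw one realisation of the uncertain variables (R, S)."""
    return rng.gauss(MU_R, SIGMA_R), rng.gauss(MU_S, SIGMA_S)


def limit_state(x):
    """Limit state g = R - S; g <= 0 is taken to mean failure."""
    resistance, load = x
    return resistance - load


def estimate_failure_probability(g, sampler, n_samples, seed=0):
    """Crude Monte Carlo estimate of P[g(X) <= 0]."""
    rng = random.Random(seed)
    failures = sum(1 for _ in range(n_samples) if g(sampler(rng)) <= 0.0)
    return failures / n_samples


def exact_failure_probability():
    """Closed form for the linear normal case: Pf = Phi(-beta)."""
    beta = (MU_R - MU_S) / math.sqrt(SIGMA_R ** 2 + SIGMA_S ** 2)
    return NormalDist().cdf(-beta)


if __name__ == "__main__":
    p_mc = estimate_failure_probability(limit_state, sample_inputs, 100_000)
    print("Monte Carlo:", p_mc)
    print("Exact:      ", exact_failure_probability())
```

Each sample requires one evaluation of the limit state function. When that evaluation is a time-consuming simulation and the failure probability is small, the number of samples needed becomes prohibitive, which motivates the modified sampling and surrogate-based techniques mentioned above.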


Objective: To use optimisation under uncertainty to optimise multi-phase processes with evolvable requirements.

of processes evolve through different phases, each characterised by different objectives and constraints. The design process itself can evolve over a long time span during which requirements and specifications gradually become more clearly defined. Furthermore, the final product will have to be able to perform a multitude of tasks, which are often interrelated. The meaningful quantification of system reliability is important in the earlier design stages, when tasks are not fully identified and information is lacking. In the case of more classical single-phase processes (e.g., the control of the trajectory of an aircraft), measurements are used in combination with a model to update the state of the system and make decisions (e.g., in the case of Model Predictive Control). Measurements are generally affected by aleatory uncertainty, while models are affected by a combination of epistemic and aleatory uncertainties. In the case of multi-phase design and decision-making processes, expert knowledge is injected into the process at every phase, analogous to instrument measurements in state estimation. This knowledge can be incomplete and subjective, and requires a particular treatment. In both cases, the use of Imprecise Probabilities (IP) can help in the quantification of all types of uncertainty affecting the evolution of a process.

The study of evolvable processes in the framework of IP represents a key novelty of UTOPIAE. System structure and functions will be represented as an imprecise probability. Lower and upper probabilities of system functioning will be approximated to treat realistic problems. Robust Bayesian approaches and Imprecise Probability Theory (IPT) methods for estimating imprecise transition (and emission) probabilities for (hidden) stationary and non-stationary Markov models will be applied to the OUU of single- and multi-phase processes. WP3.5 will generalise IP-based reliability analysis of components or subsystems to that of systems and will scale up the basic theory to make it applicable to real-world problems for the first time.
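
Lower and upper probabilities of system functioning can be illustrated on a very small imprecise Markov model. The following is a minimal sketch, assuming a hypothetical two-state system (working, failed) whose failure and repair probabilities per phase are known only as intervals, chosen independently for each state and each phase. The function names and interval values are illustrative and are not taken from the WP3.5 methods. The lower expectation is propagated backwards one phase at a time, and the upper probability follows from the conjugacy relation: upper(f) = -lower(-f).

```python
def lower_step(f, intervals):
    """One backward step of the lower expectation operator.

    f = (f_working, f_failed) is a function on the two states.
    intervals = ((a_lo, a_hi), (b_lo, b_hi)), where a = P(failed | working)
    and b = P(working | failed). Each expectation is linear in a or b,
    so its minimum over the interval is attained at an endpoint.
    """
    (a_lo, a_hi), (b_lo, b_hi) = intervals
    f_w, f_f = f
    from_working = min((1.0 - a) * f_w + a * f_f for a in (a_lo, a_hi))
    from_failed = min(b * f_w + (1.0 - b) * f_f for b in (b_lo, b_hi))
    return (from_working, from_failed)


def working_probability_bounds(n_phases, intervals):
    """Lower and upper probability that the system works after n_phases,
    returned as two tuples indexed by the initial state (working, failed)."""
    lower = (1.0, 0.0)          # indicator of the working state
    neg_lower = (-1.0, 0.0)     # negated indicator, used for the upper bound
    for _ in range(n_phases):
        lower = lower_step(lower, intervals)
        neg_lower = lower_step(neg_lower, intervals)
    upper = tuple(-value for value in neg_lower)
    return lower, upper


if __name__ == "__main__":
    # Hypothetical intervals: failure in [0.05, 0.10], repair in [0.20, 0.40].
    lower, upper = working_probability_bounds(5, ((0.05, 0.10), (0.20, 0.40)))
    print("Starting from working:", lower[0], upper[0])
    print("Starting from failed: ", lower[1], upper[1])
```

The width of the resulting interval reflects how much of the uncertainty about the transition probabilities carries over to the reliability estimate after several phases.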
